Microsoft launched Phi-3 earlier this week on Hugging Face, Ollama and the Azure AI catalog. While it does not quite match the general knowledge of Windows Copilot, the open-source model represents the fourth generation of small language models (SLMs) from Redmond that rival mainstream LLMs in speed, efficiency and performance.

At 3.8 billion parameters, Phi-3-mini is larger than its 2.7-billion-parameter predecessor, Phi-2, but remains small enough that, once quantized to 4 bits, it occupies only about 1.8GB of memory and can run natively on a phone. For comparison, mainstream LLMs such as Llama 2 70B or GPT-3.5 use tens to hundreds of billions of parameters and are impractical to run locally on such devices. GPT-5, reportedly launching this summer, is expected to run to trillions of parameters. By conventional scaling laws, more parameters mean more capable models. According to Microsoft, however, that is not necessarily the case.
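For a sense of where the 1.8GB figure comes from, here is a back-of-the-envelope sketch. It assumes the weights are quantized to 4 bits (half a byte per parameter) and counts the weights alone, ignoring runtime memory such as the KV cache and any quantization metadata; the function name is illustrative, not part of any Microsoft tooling.

```python
# Rough weight-storage estimate for a model at different precisions.
# Assumption: weights only; KV cache, activations and quantization
# metadata (e.g. per-block scales) are ignored.

def weight_footprint_bytes(num_params: float, bits_per_param: int) -> float:
    """Return the bytes needed to store num_params weights at bits_per_param bits each."""
    return num_params * bits_per_param / 8

PHI3_MINI_PARAMS = 3.8e9  # parameter count quoted in the article

for bits in (16, 8, 4):
    size = weight_footprint_bytes(PHI3_MINI_PARAMS, bits)
    # Report both decimal gigabytes and binary gibibytes.
    print(f"{bits:>2}-bit: {size / 1e9:.2f} GB ({size / 2**30:.2f} GiB)")
```

At 4 bits this works out to 1.9 billion bytes, or roughly 1.77 GiB, which lines up with the "about 1.8GB" figure; the same model at 16-bit precision would need four times as much.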

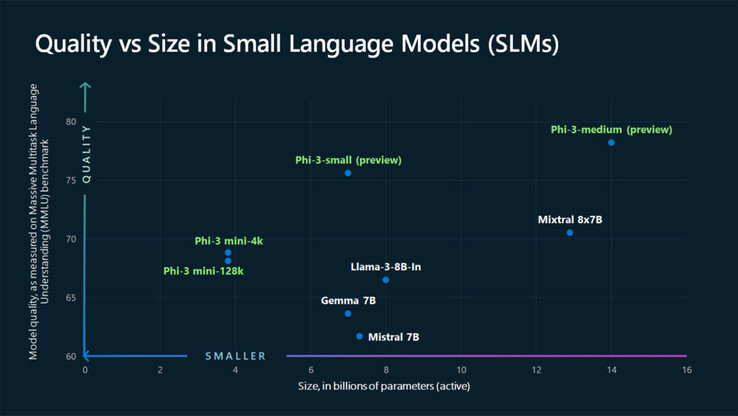

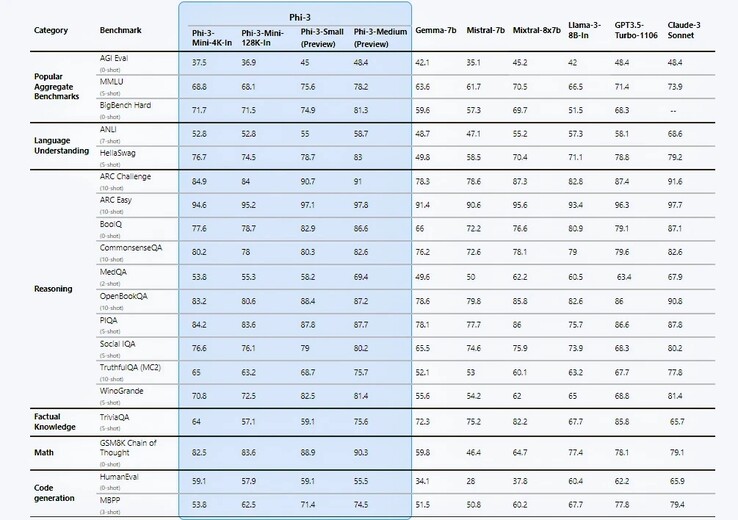

Microsoft makes some bold claims in its technical report, chief among them the benchmark results, which are, by the company's own admission, purely academic. In 12 out of 19 benchmark tests, Phi-3-mini appears to outperform Llama-3-8B-instruct, despite the latter having more than twice as many parameters. With the 7B Phi-3-small and the 14B Phi-3-medium, the results were even more striking.

The engineers attribute these efficiency gains to a carefully curated training dataset drawn from two sources: ‘textbook-quality’ web content and AI-generated data designed to teach language, general knowledge and common-sense reasoning, with a cherry-picked list of 3,000 words serving as building blocks. Microsoft’s researchers claim that this data recipe enabled last year's Phi-2 to match the performance of Meta's considerably larger Llama-2 (70B) model.

Eric Boyd, corporate VP of Azure AI, told The Verge that Phi-3 is just as capable as GPT-3.5, albeit in a “smaller form factor”. However, Phi-3 still suffers from limited factual knowledge because of its small size. Perhaps this is a necessary trade-off for AI that runs natively rather than in the cloud?

Given that flexibility and cost efficiency are key concerns for businesses, it is not surprising that companies have already begun to harness the capabilities of SLMs. However, Phi-3 faces stiff competition. Meta's Llama-3, Anthropic’s Claude-3 suite, and Google's Gemini and Gemma families all include lightweight models, some of which are suited to edge computing on mobile devices. And although Phi-3 seems to compare favorably, Gemini Nano has already shipped on devices such as the Google Pixel 8 Pro and the Samsung Galaxy S24 series.

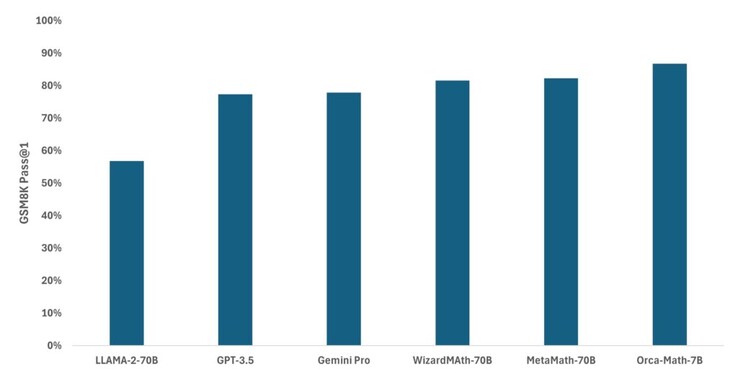

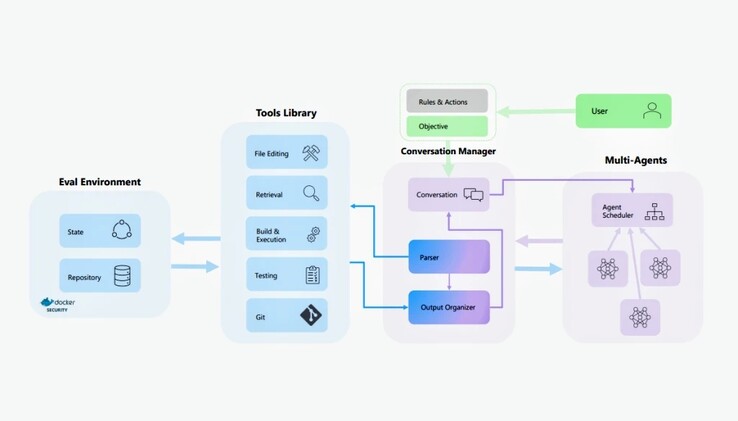

The Phi-3 family of AI models is by no means the only SLM Microsoft has been working on. Last month, the company fine-tuned Mistral 7B to create Orca-Math, a specialized model that was shown to be considerably more accurate than Llama, GPT-3.5 and Gemini Pro at grade-school math. AutoDev, a more recent project, draws on AutoGen and Auto-GPT to autonomously plan and execute programming tasks based on user-defined objectives. The AI wars are far from over, but at least at the smaller end of the scale, we have a leading contender.